Intro

This is a follow-up on my first post on building PC for deep learning.

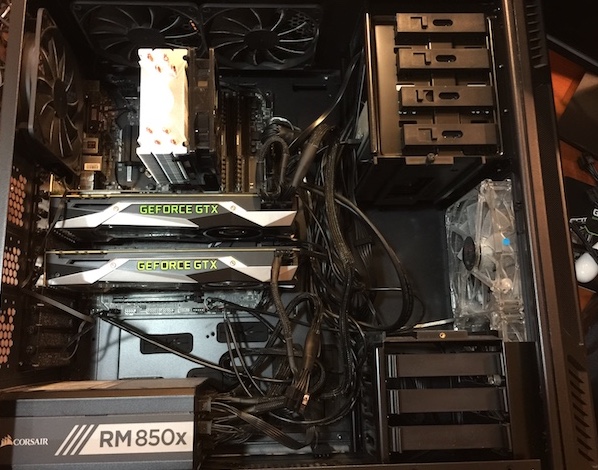

Today, I am adding a second GPU! It’s “GTX 1080 Ti Founder’s Edition”:

However, there are few bummers.

First, the card I already own is GTX 1080 without “Ti”. This means that it is a slightly different GPU (GP104) than 1080Ti (GP102) and therefore, I can not connect them via SLI bridge. I knew about this before but chose to get Ti as a second card anyway because it is a fantastic card for Deep Learning, Gaming, VR and Cryptocurrency mining (more on that later). I am very impressed with it and plan to post few benchmarks soon.

Second, is a potentially a more serious problem - my motherboard and CPU do not support 2 GPUs in PCIEx16 mode. They can only do either 1 PCIEx16 or 2 PCIEx8. Here is my parts list:

Thus, my recommendation is: if you are really serious about doing muti-gpu training (e.g. one model in data or model parallel manner) then it is a good idea to make sure that your motherboard and CPU can provide enough PCIE lanes to utilize the cards’ bandwidth to the fullest.

But when 2x8 is “as good as” 2x16? When training separate models on separate GPUs, and when the compute to data transfer ratio is high. But again, if your budget permits it, get a better motherboard and CPU.

Other than that, everything else went smoothly and my power supply can handle the full load on both cards without issues. Here is my “final” build:

I also got HTC Vive VR headset and either of my cards is enough to play current games in full quality.